ProxySQL's prepared statement cache refactor, explained

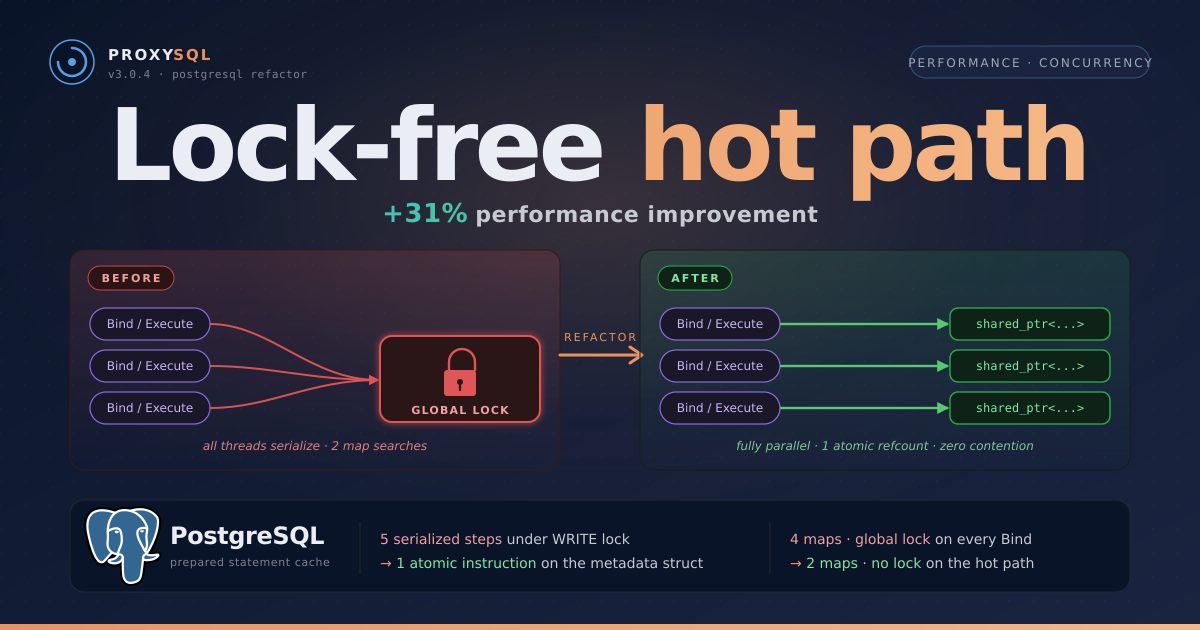

TL;DR — ProxySQL 3.0.4 made PostgreSQL prepared statements 18–31% faster, scaling with client count. The refactor drops the global lock from the hot path and collapses refcounting into a single atomic instruction.

The bottleneck

ProxySQL sits between application clients and PostgreSQL backends. When clients use prepared statements through the Extended Query protocol (Parse, Bind, Describe, Execute, Close), every one of those operations needs statement metadata: parameter types, query hash, backend-side IDs.

To share metadata across connections, ProxySQL kept a global cache behind a read-write lock. Every worker thread hit that lock on every prepared-statement operation — the obvious bottleneck under concurrency. Less obvious but just as costly: the work done under the lock. Every Bind, Describe, and Execute performed an O(log n) search through the global map to convert an ID into a pointer — work that grew as the cache grew, and that the session didn’t actually need to do. And refcounting was worse still: every increment or decrement took the write lock, not just a read lock, briefly freezing every other thread out of the cache entirely.

How the old design worked

This design was modeled on ProxySQL’s MySQL prepared statement cache, which uses the same pattern.

The initial implementation had three layers. Each client connection kept a local map (stmt_name_to_global_ids) from statement names to integer IDs, plus a reverse multimap (global_id_to_stmt_names) for cleanup. Each backend connection had a similar pair. And then there was the global manager with two maps: map_stmt_id_to_info mapping IDs to metadata pointers, and map_stmt_hash_to_info mapping query hashes to the same pointers. All protected by a single global read-write lock.

So when a Bind, Describe, or Execute came in, the code would: search the local map to get an integer ID, then acquire the global read lock, then search the global map_stmt_id_to_info to get the actual metadata pointer, then release the lock. Two map searches, one lock acquisition, one lock release. On every operation, from every thread. The lock was bad, but the redundant global map search was arguably worse. Even with zero contention, we were doing an O(log n) tree traversal on the global map for every single Bind, Describe, and Execute, just to convert an ID we already had into a pointer we actually needed.

%%{init: {'theme':'base','themeVariables':{'fontFamily':'ui-sans-serif, -apple-system, Segoe UI, sans-serif','fontSize':'14px','primaryColor':'#ffffff','primaryTextColor':'#1f2430','primaryBorderColor':'#cbd0dc','lineColor':'#8a8e9a'},'flowchart':{'nodeSpacing':30,'rankSpacing':45,'padding':10,'curve':'basis'}}}%%

flowchart TD

A(["Client A<br/>Bind / Execute / ..."]):::client

B(["Client B<br/>Bind / Execute / ..."]):::client

C(["Client N<br/>Bind / Execute / ..."]):::client

A --> A1["Search #1: Local map<br/>name → integer ID<br/><i>Just an integer!</i>"]:::local

B --> B1["Search #1: Local map<br/>name → integer ID<br/><i>Just an integer!</i>"]:::local

C --> C1["Search #1: Local map<br/>name → integer ID<br/><i>Just an integer!</i>"]:::local

subgraph GL["Before — global lock region, hot-path bottleneck"]

direction TB

L["Acquire global read lock<br/><i>Cache-line contention; readers stall on writer</i>"]:::lock

S2["Search #2: Global map_stmt_id_to_info<br/>O(log n) tree traversal to convert ID → pointer<br/><i>Redundant work</i>"]:::warn

U["Use metadata pointer • Release lock"]:::lock

L --> S2 --> U

end

A1 --> L

B1 --> L

C1 --> L

U --> Send["Build request, send to backend<br/><i>Finally proceed</i>"]:::neutral

Cost["<div style='width:540px'><b>Per operation:</b> 2 map searches + 1 lock acquire/release<br/><b>Per refcount change:</b> write lock + search + modify + potential inline purge</div>"]:::infoRedBox

Send ~~~ Cost

linkStyle 5,6,7 stroke:#d44a4a,stroke-width:2px

classDef client fill:#efe8ff,stroke:#8a6ce0,stroke-width:1.5px,color:#4a3a80

classDef local fill:#e6f1fc,stroke:#5aa0e0,stroke-width:1px,color:#2a5578

classDef lock fill:#fcdede,stroke:#d44a4a,stroke-width:1.8px,color:#7a1f1f

classDef warn fill:#fdecd2,stroke:#d88a40,stroke-width:1.5px,color:#6f430f

classDef neutral fill:#f3f4f7,stroke:#8a8e9a,stroke-width:1px,color:#3a3e48

classDef infoRedBox fill:#fdf0f0,stroke:#a04040,stroke-width:1.2px,stroke-dasharray:5 3,color:#702020

style GL fill:#fff6f6,stroke:#c84848,stroke-width:1.5px,stroke-dasharray:5 4,color:#802020Before — every Bind, Describe, Execute, and Close required two map searches (local + global) and a lock. The global search was pure overhead.

The integer-ID approach makes total sense when you first build something like this. You don’t want your local maps tightly coupled to the global store, so you use IDs as an abstraction layer. It’s clean, it’s flexible. But as we benchmarked under higher concurrency, two costs became clear. First, the lock: every Bind, Describe, and Execute had to acquire the global lock just to read metadata, and even read locks cause cache-line bouncing under contention. Second, the search: every operation performed an O(log n) tree traversal on the global map_stmt_id_to_info just to convert an integer into a pointer. That search was wasted work. The local map already knew which statement we needed. It was storing an ID instead of the answer.

What changed in the refactor

If the local map already maps a statement name to something, why map it to an integer you then have to look up again?

That realization pointed to the fix: change what the local map stores. Instead of an integer ID, store a std::shared_ptr<const PgSQL_STMT_Global_info> directly. The local map no longer points to an ID that you have to resolve. It points to the actual metadata.

| Before | After |

|---|---|

| ✕ Local map stores integer IDs | ✓ Local map stores shared_ptr directly |

| ✕ Two map searches per operation (local + global) | ✓ One map search per operation (local only) |

| ✕ Global lock on every hot-path operation | ✓ Zero locks on hot path |

| ✕ Refcount: write lock → search → modify → inline purge → unlock | ✓ Refcount: one atomic instruction, purge separated |

| ✕ Four maps (2 client-side local + 2 global) | ✓ Two maps (1 client-side local + 1 global) |

| ✕ Manual memory management (raw pointers, manual delete) | ✓ Automatic lifetime via shared_ptr |

%%{init: {'theme':'base','themeVariables':{'fontFamily':'ui-sans-serif, -apple-system, Segoe UI, sans-serif','fontSize':'14px','primaryColor':'#ffffff','primaryTextColor':'#1f2430','primaryBorderColor':'#cbd0dc','lineColor':'#8a8e9a'},'flowchart':{'nodeSpacing':40,'rankSpacing':40,'padding':10,'curve':'basis'}}}%%

flowchart TD

subgraph Threads["After — session-local, no locks, fully parallel"]

direction LR

A(["Client A<br/>Bind / Execute / ..."]):::client

B(["Client B<br/>Bind / Execute / ..."]):::client

C(["Client N<br/>Bind / Execute / ..."]):::client

A --> A1["Local map lookup<br/>name → shared_ptr<metadata>"]:::local

B --> B1["Local map lookup<br/>name → shared_ptr<metadata>"]:::local

C --> C1["Local map lookup<br/>name → shared_ptr<metadata>"]:::local

A1 --> A2["Direct metadata access<br/>No lock • No global map"]:::good

B1 --> B2["Direct metadata access<br/>No lock • No global map"]:::good

C1 --> C2["Direct metadata access<br/>No lock • No global map"]:::good

A2 --> A3[/"Send to backend"/]:::neutral

B2 --> B3[/"Send to backend"/]:::neutral

C2 --> C3[/"Send to backend"/]:::neutral

end

Note["<div style='width:540px'>Global map still exists — used only during <b>Parse</b><br/>(first time a query is prepared)</div>"]:::info

B3 ~~~ Note

linkStyle 3,4,5 stroke:#2fa25a,stroke-width:2px

linkStyle 6,7,8 stroke:#2fa25a,stroke-width:2px

classDef client fill:#efe8ff,stroke:#8a6ce0,stroke-width:1.5px,color:#4a3a80

classDef local fill:#e6f1fc,stroke:#5aa0e0,stroke-width:1px,color:#2a5578

classDef good fill:#dff3e4,stroke:#2fa25a,stroke-width:1.8px,color:#1d5a34

classDef neutral fill:#f3f4f7,stroke:#8a8e9a,stroke-width:1px,color:#3a3e48

classDef info fill:#f8f9fc,stroke:#a0a4b0,stroke-width:1px,stroke-dasharray:5 3,color:#5a5e6a

style Threads fill:#f4fbf6,stroke:#2fa25a,stroke-width:1.5px,color:#1d5a34After — each thread resolves metadata from its own local map via shared_ptr. Zero global synchronization on the hot path.

Why shared_ptr?

Once the local map stores metadata directly instead of an integer ID, something has to keep that metadata alive. The object is shared: the global cache holds a reference, every client session that prepared the statement holds a reference, every backend session holds a reference. We needed an ownership model that said: “live as long as any holder still needs you.”

That is exactly what std::shared_ptr<const PgSQL_STMT_Global_info> gives us. Every map entry that holds the pointer increments a reference count. When the last holder drops its pointer, the object is destroyed automatically. No manual new/delete, no risk of one session freeing memory another session is still using.

The const qualifier matters too. Once a prepared statement’s metadata is created, its query text and parameter types never change. const makes that contract explicit — and makes it safe for many threads to read the same object concurrently, no synchronization needed.

What is special about Parse?

Parse is the one place we still need the global map. You prepare a statement once, then execute it many times, so this is the cold path. But we added a local fast path that handles the most common case without touching the global map at all.

%%{init: {'theme':'base','themeVariables':{'fontFamily':'ui-sans-serif, -apple-system, Segoe UI, sans-serif','fontSize':'14px','primaryColor':'#ffffff','primaryTextColor':'#1f2430','primaryBorderColor':'#cbd0dc','lineColor':'#8a8e9a'},'flowchart':{'nodeSpacing':35,'rankSpacing':40,'padding':10,'curve':'basis'}}}%%

flowchart TD

Start(["Parse message arrives"]):::start

Hash["Compute query hash (SpookyHash)"]:::neutral

Start --> Hash

subgraph T1Box["Tier 1: Local fast path"]

direction LR

T1{{"Does local map already have this hash?<br/>→ Reuse immediately"}}:::good

Done1(["<b>Done!</b><br/>No lock, no I/O"]):::goodExit

T1 -- hit --> Done1

end

subgraph T2Box["Tier 2: Global cache lookup"]

direction LR

T2{{"Another session prepared this?<br/>→ Link local map to it"}}:::blue

Done2(["<b>Done!</b><br/>Read lock only"]):::blueExit

T2 -- hit --> Done2

end

subgraph T3Box["Tier 3: Forward to backend"]

direction TB

T3["First-ever prepare<br/>→ Send to PostgreSQL, cache result"]:::warn

Store["Store in global & local maps"]:::neutral

T3 --> Store

end

Hash --> T1

T1 -- miss --> T2

T2 -- miss --> T3

linkStyle 1 stroke:#2fa25a,stroke-width:2.2px

linkStyle 2 stroke:#3c87c8,stroke-width:2.2px

linkStyle 5 stroke:#d88a40,stroke-width:1.6px,stroke-dasharray:4 3

linkStyle 6 stroke:#d88a40,stroke-width:1.6px,stroke-dasharray:4 3

classDef start fill:#efe8ff,stroke:#8a6ce0,stroke-width:1.5px,color:#4a3a80

classDef neutral fill:#f3f4f7,stroke:#8a8e9a,stroke-width:1px,color:#3a3e48

classDef good fill:#dff3e4,stroke:#2fa25a,stroke-width:1.8px,color:#1d5a34

classDef goodExit fill:#c9ebd3,stroke:#2fa25a,stroke-width:1.8px,color:#0f4a24

classDef blue fill:#e1edfa,stroke:#3c87c8,stroke-width:1.8px,color:#1d3f5a

classDef blueExit fill:#cee0f3,stroke:#3c87c8,stroke-width:1.8px,color:#0f2f4a

classDef warn fill:#fdecd2,stroke:#d88a40,stroke-width:1.5px,color:#6f430f

style T1Box fill:#f4fbf6,stroke:#2fa25a,stroke-width:1.5px,color:#1d5a34

style T2Box fill:#f3f8fd,stroke:#3c87c8,stroke-width:1.5px,color:#1d3f5a

style T3Box fill:#fef7ea,stroke:#d88a40,stroke-width:1.5px,color:#6f430fParse resolves through three tiers. The local fast path (Tier 1) is the common case for unnamed statement re-parses — zero locks, zero network I/O.

This matters a lot in practice. Client libraries like libpq constantly re-parse the unnamed statement ("") for parameterized queries. Without the fast path, every single one of those re-parses hits the global map. With it, the code checks: does the local map already have an entry with a matching hash? If yes, reuse it. No lock, no network round-trip, done.

How references and refcounts fit together

There are actually two refcounting layers working together in the new design:

shared_ptrreference count — C++‘s automatic lifetime primitive. Answers: “Is any pointer to this metadata object still alive?” When the lastshared_ptrdrops, the object is destroyed. This is memory safety.- Atomic

ref_count_clientandref_count_server— application-level counters on the metadata struct. Answer: “How many client and backend connections currently have this statement prepared?” Used for metrics and for deciding when a statement is eligible for purge.

They’re kept in sync — every time a local map stores a shared_ptr, the corresponding atomic refcount is also bumped — but they answer different questions and serve different purposes. The purge logic uses both: it only removes a statement when the shared_ptr use count has dropped to 1 (only the global cache still holds it) and both atomic refcounts are zero (no connection has it prepared). Both checks run under the write lock (Phase 2 below) — that’s what makes use_count() reliable here.

In the old design, incrementing a refcount required acquiring the global lock, searching the map by ID, modifying a plain int, and releasing. All that for refcount++. That was one of the first things we targeted.

Now the refcount lives directly on the metadata struct as mutable std::atomic<uint32_t>. Since the local map already holds a shared_ptr to the struct, bumping the refcount is one atomic instruction on the struct you already have. No lock, no search.

%%{init: {'theme':'base','themeVariables':{'fontFamily':'ui-sans-serif, -apple-system, Segoe UI, sans-serif','fontSize':'14px','primaryColor':'#ffffff','primaryTextColor':'#1f2430','primaryBorderColor':'#cbd0dc','lineColor':'#8a8e9a'},'flowchart':{'nodeSpacing':25,'rankSpacing':25,'padding':8,'curve':'basis'}}}%%

flowchart LR

subgraph OLD ["Before — ref_count_client(stmt_id, +1)"]

direction TB

O1["<div style='width:260px'>1. Acquire WRITE lock</div>"]:::lock

O2["<div style='width:260px'>2. Search map_stmt_id_to_info</div>"]:::lock

O3["<div style='width:260px'>3. Modify plain int refcount</div>"]:::lock

O4["<div style='width:260px'>4. Inline purge (maybe)<br/>Iterate entire map, collect<br/>into vector, delete, all under lock</div>"]:::warn

O5["<div style='width:260px'>5. Release WRITE lock</div>"]:::lock

OX(["<div style='width:240px'>Blocks ALL readers + writers</div>"]):::infoDanger

O1 --> O2 --> O3 --> O4 --> O5

O5 ~~~ OX

end

subgraph NEW ["After — ref_count_client(ptr, +1)"]

direction TB

N1["<div style='width:260px'>Already have shared_ptr<br/>to the metadata struct</div>"]:::local

N2["<div style='width:260px'>atomic fetch_add(1)<br/>directly on the struct</div>"]:::good

N3(["<div style='width:240px'>No lock • No map search • No inline purge</div>"]):::infoGood

N4(["<div style='width:240px'>Purge runs separately, rarely, with pre-check</div>"]):::infoGood

N5(["<div style='width:240px'>Per-thread stats (no contention)</div>"]):::infoGood

N1 --> N2

N2 ~~~ N3

N3 ~~~ N4

N4 ~~~ N5

end

OLD ~~~ NEW

linkStyle 5 stroke:#2fa25a,stroke-width:2px

classDef lock fill:#fcdede,stroke:#d44a4a,stroke-width:1.6px,color:#7a1f1f

classDef warn fill:#fdecd2,stroke:#d88a40,stroke-width:1.5px,color:#6f430f

classDef local fill:#e6f1fc,stroke:#5aa0e0,stroke-width:1px,color:#2a5578

classDef good fill:#dff3e4,stroke:#2fa25a,stroke-width:1.8px,color:#1d5a34

classDef infoDanger fill:#fdeaea,stroke:#a02828,stroke-width:1px,stroke-dasharray:5 3,color:#6a1010

classDef infoGood fill:#eef7f0,stroke:#2fa25a,stroke-width:1px,stroke-dasharray:5 3,color:#1d5a34

style OLD fill:#fff6f6,stroke:#c84848,stroke-width:1.5px,color:#802020

style NEW fill:#f4fbf6,stroke:#2fa25a,stroke-width:1.5px,color:#1d5a34Old: 5 steps under write lock, potentially iterating the entire map. New: one atomic instruction, no lock.

One detail worth calling out: the mutable on those atomics. The metadata struct is shared as shared_ptr<const> so nobody can accidentally modify the query text or parameter types from another thread. But refcounts obviously need to change, hence mutable. Clean separation: the data is frozen, only the bookkeeping is mutable.

We also moved statistics to a per-thread array. The old code updated a single statuses struct behind the global lock. Now each worker thread gets its own slot and just does a plain integer increment. No atomics needed, no contention.

How purge works

When no connection references a statement anymore, it can be removed from the global cache. The purge checks two things: shared_ptr.use_count() == 1 (only the global map holds it, no session has a copy) and both atomic refcounts at zero (no active usage). These aren’t redundant. A connection can hold a shared_ptr without actively using the statement. Both must be zero.

This is also what pins destruction to purge and nowhere else. Sessions can drop their shared_ptr copies freely — each drop just decrements the shared_ptr’s own reference count; it never reaches zero while the global cache is still holding its copy. Because purge is the only operation that removes the cache’s entry, and it only does so when every session has already let go, the cache’s release is always the final one. A statement’s lifetime ends here, by the cache’s decision, not by whichever session happens to close last.

To avoid locking on every refcount change, the purge uses a two-phase approach:

%%{init: {'theme':'base','themeVariables':{'fontFamily':'ui-sans-serif, -apple-system, Segoe UI, sans-serif','fontSize':'14px','primaryColor':'#ffffff','primaryTextColor':'#1f2430','primaryBorderColor':'#cbd0dc','lineColor':'#8a8e9a'},'flowchart':{'nodeSpacing':25,'rankSpacing':40,'padding':10,'curve':'basis'}}}%%

flowchart TB

subgraph PH1["Phase 1: Lock-free pre-check"]

P1["<b>Read atomic zero-count counters</b><br/>Cache exceeded limit?<br/>>10% entries unreferenced?<br/>>1 second since last purge?"]:::good

end

P1 -- all conditions fail --> Skip(["Skip (common)"])

P1 -- maybe --> P2

subgraph PH2["Phase 2: Under write lock"]

P2["Re-verify all conditions<br/>For each candidate:<br/>shared_ptr.use_count() == 1?<br/>Both atomic refcounts == 0?"]:::blue

end

P2 --> Erase["Erase from map, recycle ID<br/>shared_ptr handles deallocation"]

Skip:::goodExit

Erase:::neutral

Insight["<div style='width:540px'>Under normal operation, Phase 1 short-circuits almost every time — the write lock is rarely needed</div>"]:::info

Erase ~~~ Insight

linkStyle 0 stroke:#2fa25a,stroke-width:2px

linkStyle 1 stroke:#d88a40,stroke-width:1.8px,stroke-dasharray:4 3

classDef good fill:#dff3e4,stroke:#2fa25a,stroke-width:1.8px,color:#1d5a34

classDef goodExit fill:#c9ebd3,stroke:#2fa25a,stroke-width:1.8px,color:#0f4a24

classDef blue fill:#e1edfa,stroke:#3c87c8,stroke-width:1.8px,color:#1d3f5a

classDef neutral fill:#f3f4f7,stroke:#8a8e9a,stroke-width:1px,color:#3a3e48

classDef info fill:#f8f9fc,stroke:#a0a4b0,stroke-width:1px,stroke-dasharray:5 3,color:#5a5e6a

style PH1 fill:#f4fbf6,stroke:#2fa25a,stroke-width:1.5px,color:#1d5a34

style PH2 fill:#f3f8fd,stroke:#3c87c8,stroke-width:1.5px,color:#1d3f5aThe pre-check reads two atomic counters and a size — no lock, no iteration. Only when the heuristic trips does the write lock get acquired.

What became simpler?

Removing the indirection let us delete entire data structures. The client-side reverse-lookup multimap inside each session is gone. The ID-based global metadata map (map_stmt_id_to_info) is gone. The lookup function that every hot-path operation used to call is gone. After the refactor, each client connection has one client-side local map instead of two, and the global manager has one map instead of two.

The metadata object itself is now reference-counted through shared_ptr, so manual new/delete calls and the bookkeeping that went with them are gone too. Memory cleanup happens automatically once the last reference goes out of scope.

Net effect: less memory per connection, fewer moving parts to reason about, and the surface area of the cache subsystem is meaningfully smaller.

What do the benchmark numbers show?

We benchmarked with pgbench in prepared query mode:

| ProxySQL threads | pgbench clients | TPS before | TPS after | Improvement | Latency before | Latency after |

|---|---|---|---|---|---|---|

| 1 | 1 | 3,944 | 5,078 | +29% | 4.06 ms | 3.16 ms |

| 1 | 4 | 3,435 | 4,454 | +30% | 4.66 ms | 3.59 ms |

| 1 | 16 | 3,286 | 4,312 | +31% | 4.87 ms | 3.71 ms |

| 2 | 1 | 6,263 | 7,377 | +18% | 2.56 ms | 2.17 ms |

| 2 | 4 | 5,180 | 6,557 | +27% | 3.10 ms | 2.44 ms |

| 2 | 16 | 4,902 | 6,219 | +27% | 3.26 ms | 2.57 ms |

pgbench in prepared query mode, 60-second runs, averaged over two runs.

The pattern is clear: gains are biggest at higher client counts. That’s exactly what you’d expect when you remove lock contention. More threads fighting over the same mutex = more time wasted waiting.

The single-thread numbers are worth noting. Even with no contention at all, there’s a 29% improvement. That’s the redundant global map lookup showing up — the lock wasn’t the only cost, it was just the most visible one. Removing that extra O(log n) search per operation pays off even when there’s no one to contend with.

%%{init: {'theme':'base','themeVariables':{'fontFamily':'ui-sans-serif, -apple-system, Segoe UI, sans-serif','fontSize':'14px','xyChart':{'backgroundColor':'#ffffff','titleColor':'#1f2430','xAxisLabelColor':'#3a3e48','yAxisLabelColor':'#3a3e48','xAxisLineColor':'#cbd0dc','xAxisTickColor':'#cbd0dc','yAxisLineColor':'#cbd0dc','yAxisTickColor':'#cbd0dc','plotColorPalette':'#2fa25a','titleFontSize':18,'xAxisLabelFontSize':14,'yAxisLabelFontSize':14,'xAxisTitleFontSize':14,'yAxisTitleFontSize':14}}}}%%

xychart-beta horizontal

title "Throughput improvement (%)"

x-axis ["1 ProxySQL thread, 1 client", "1 ProxySQL thread, 4 clients", "1 ProxySQL thread, 16 clients", "2 ProxySQL threads, 1 client", "2 ProxySQL threads, 4 clients", "2 ProxySQL threads, 16 clients"]

y-axis "Improvement %" 0 --> 35

bar [29, 30, 31, 18, 27, 27]Throughput gains are strongest under high client concurrency — the exact scenario where lock contention hurts most.

What’s the takeaway?

One of the most reliable performance principles is to keep the hot path lean and let the cold paths do more thinking. Here, Parse got smarter — it now resolves in three tiers: a local fast path that short-circuits repeat prepares with a hash comparison, a global cache lookup under a read lock for statements other sessions have already prepared, and a backend fallback for first-time preparation. Each tier is an early exit, so the extra code costs nothing in the common cases — Parse is actually faster than before, not slower. In exchange, Bind, Describe, and Execute got dramatically faster: a single local lookup with no global synchronization, instead of two map searches and a global lock on every call. Refcounting collapsed from five serialized steps under a write lock into a single atomic instruction. That is the right trade for scalable systems: do more on the cold path, so the hot path does less. It’s one of the oldest tricks in performance work — and still one of the most underused, because the instinct is to make every operation simple, not to make the common one the simplest.