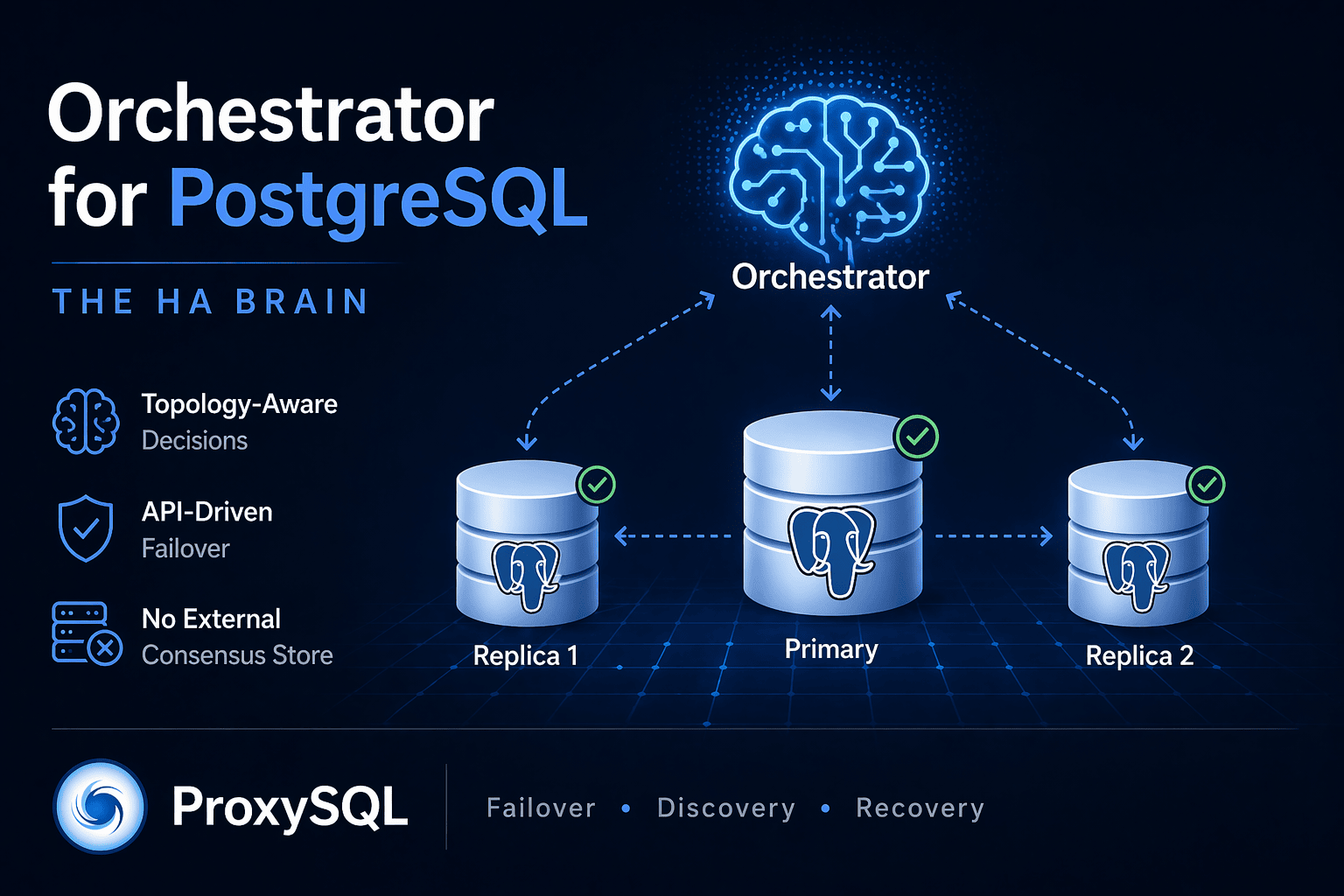

Orchestrator for PostgreSQL: the HA brain, now first-party

Part of the broader ProxySQL for PostgreSQL — the failover model series.

Most PostgreSQL operators we meet have opinions about Patroni. Fewer have opinions about Orchestrator. That’s a historical artefact: Orchestrator was born in the MySQL world at Outbrain, was refined at Booking.com, became mainstream at GitHub, and only picked up PostgreSQL support after the ProxySQL team forked it. If you run PostgreSQL and haven’t looked at Orchestrator, there are three reasons to look now: the PG support is real and under CI; it comes from the same people who ship the proxy you already talk to; and, unlike Patroni, it does not require you to deploy and operate an external distributed consensus store (etcd / Consul / ZooKeeper) alongside it.

This post is a short tour. We’ll cover what Orchestrator is, how its mental model differs from Patroni’s, what the minimum working configuration for PostgreSQL looks like, and what Orchestrator deliberately does not do so you know what pieces you still need to bring. A companion post measures the actual unplanned-primary-failure window with Orchestrator and ProxySQL back-to-back.

The fork chain

Orchestrator started in 2014 as a MySQL topology manager, authored by Shlomi Noach. When Shlomi left GitHub, Percona took over the public fork and used Orchestrator as the control plane inside their operator. That fork is stable but not maintained outside the scope of the operator, and it is MySQL-only: `pg_is_in_recovery`, `pg_promote`, and the rest of the PostgreSQL vocabulary were never part of it.

The ProxySQL team forked Percona’s Orchestrator and added:

- a PostgreSQL provider (`ProviderType: "postgresql"`) that speaks libpq, reads `pg_stat_replication` for topology discovery, calls `pg_promote()` to elect new primaries, and reconfigures `primary_conninfo` on surviving replicas after a failover;

- matching CI: every push and pull request runs `test-postgresql.sh` against PostgreSQL 15, 16, and 17 via GitHub Actions. The `functional-postgresql` job exercises topology discovery, graceful switchover (happy path and negative cases), and kill-current-primary failover on every commit;

- release packaging matched to ProxySQL’s own release cadence, so a deployment can pin both sides to versions that were tested together.

The code is Apache-2.0 licensed; the fork chain is intact; everything upstream that Percona shipped for MySQL still works on the MySQL provider. The PG provider is additive, not a rewrite.

Mental model

Orchestrator is a topology manager. It is not a proxy, not a fencing tool, and not a consensus system. It does three things:

- Discovery. You hand Orchestrator one instance through the API and it walks the replication graph, with one asymmetry worth knowing about on the PG side. Walking upward from a standby works cleanly: the standby’s `primary_conninfo` carries the primary’s full `host:port`, so Orchestrator finds the primary by parsing that string. Walking downward from a primary to its replicas reads `pg_stat_replication`, which only gives you each replica’s `client_addr` and an ephemeral source `client_port`, not the replica’s actual listening port. The PG provider falls back to `DefaultInstancePort` (see the config below) to fill that in, which works fine when every replica does listen on that port (typical containerised or one-per-host deployments), and doesn’t when they don’t. If your PG instances live on mixed ports, seed each of them explicitly via `/api/discover`. Each instance’s state (primary vs in recovery, read-only or writable, LSN, replica of whom) is recorded in Orchestrator’s own backing database.

- Continuous probing. Every `InstancePollSeconds`, Orchestrator polls every known instance and updates the record. If an instance stops answering, it is flagged `LastCheckValid=false`; if it stays flagged across consecutive probes, Orchestrator emits a replication analysis: `DeadPrimary`, `UnreachablePrimary`, `DeadIntermediateMaster`, etc.

- Recovery. For every analysis that matches a `Recover…ClusterFilters` regex, Orchestrator promotes the most-caught-up standby, reconfigures the rest to stream from the new primary, and fires a user-supplied shell-command hook. The hook is where you plug in anything ProxySQL-side (or anything else outside the database layer).
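The discovery asymmetry described above can be sketched in a few lines. This is an illustration only, not the PG provider’s actual code: the function names are hypothetical, the parser handles only the keyword=value form of `primary_conninfo` (no URI form, no spaces around `=`), and `PG_DEFAULT_PORT` stands in for `DefaultInstancePort`.

```python
import shlex

# Stand-in for DefaultInstancePort (assumed to be set to 5432).
PG_DEFAULT_PORT = 5432


def primary_from_conninfo(conninfo: str) -> tuple:
    """Walk upward: pull the primary's host and port out of primary_conninfo."""
    params = {}
    # shlex copes with single-quoted values such as password='s3 cret'.
    for token in shlex.split(conninfo):
        key, sep, value = token.partition("=")
        if sep:
            params[key] = value
    return params["host"], int(params.get("port", PG_DEFAULT_PORT))


def replica_from_stat_row(client_addr: str, client_port: int,
                          default_port: int = PG_DEFAULT_PORT) -> tuple:
    """Walk downward: client_port is the replica's ephemeral source port,
    so it is ignored and the configured default port is assumed instead."""
    return client_addr, default_port
```

The second function is the whole problem in miniature: nothing in the `pg_stat_replication` row says where the replica listens, so the answer is only as good as the configured default.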

Two things about that model differ from Patroni in ways worth paying attention to.

First, fewer moving parts. Patroni treats each cluster as a set of nodes agreeing on a distributed consensus store (DCS) — in production, usually a 3+ node etcd, Consul, or ZooKeeper cluster. Every Patroni process races to acquire a short-lived leader lease on the DCS, and the DCS’s own consensus algorithm breaks ties. That is a correct design, but it means “deploy Patroni” is really “deploy Patroni and deploy and operate a DCS cluster”: two distributed systems to monitor, upgrade, back up, secure, and page on. A typical production footprint is 3 Patroni nodes + 3 etcd nodes = six processes and two bodies of expertise.

Orchestrator does not need a DCS at all. For its own high availability it uses the Raft consensus algorithm between Orchestrator nodes themselves — three Orchestrator processes talking to each other, no third-party cluster, no extra port to secure, no extra dashboard to watch. For small deployments you can go even lower: a single Orchestrator node with a SQLite backing DB is a legitimate production choice when the HA tool itself is not load-bearing for your tier. The lab in the companion post runs exactly that — one Orchestrator, one SQLite file, three PostgreSQL instances.

The practical consequence is operational: fewer clusters to run and one less piece of software to upgrade in lock-step. If you’re the person who stays up at 02:00 when the pager goes off, “the HA tool itself has no DCS to be wedged by” is a real feature.

Second, API-first and topology-graph-first. Patroni’s primary abstraction is “which node in this cluster holds the lease.” The Orchestrator abstraction is a directed graph of replication relationships across however many clusters you’re managing, with a REST API over the full graph. That lends itself to good visualisation (Orchestrator ships a web UI with a live topology graph), good ad-hoc operations (you can relocate a replica to stream from a different intermediate primary with one curl), and good scriptability: the entire control surface is REST.

A minimum working config

Orchestrator is configured via a single JSON file. For a PostgreSQL deployment with a SQLite-backed control database and automatic recovery enabled, the minimum configuration is about a dozen keys:

    {
      "ListenAddress": ":3098",
      "ProviderType": "postgresql",
      "PostgreSQLTopologyUser": "orchestrator",
      "PostgreSQLTopologyPassword": "orch_pass",
      "PostgreSQLSSLMode": "disable",
      "DefaultInstancePort": 5432,
      "BackendDB": "sqlite",
      "SQLite3DataFile": "/var/lib/orchestrator/orchestrator.sqlite3",
      "InstancePollSeconds": 1,
      "FailureDetectionPeriodBlockSeconds": 5,
      "RecoveryPeriodBlockSeconds": 10,
      "RecoverMasterClusterFilters": [".*"],
      "PostMasterFailoverProcesses": [
        "/usr/local/bin/proxysql-rewire-hook.sh"
      ]
    }

Keys worth understanding:

- `ProviderType`: the switch that activates the PostgreSQL code path. Without this, Orchestrator will try to speak the MySQL protocol to your instances.

- `PostgreSQLTopologyUser` / `PostgreSQLTopologyPassword`: a PG role with the `pg_monitor` role and SUPERUSER (needed for `pg_promote()` and to reload `primary_conninfo` on surviving replicas). The CI fixture creates it as `orchestrator:orch_pass`.

- `DefaultInstancePort`: set this to 5432. Orchestrator’s history is MySQL; the default in the code is still 3306. If you leave the default and run PostgreSQL instances on non-standard listening ports, the PG provider will fall back to 3306 when it can’t determine a replica’s listening port from `pg_stat_replication`. The result is noisy `DiscoverInstance(...:3306) instance is nil` lines in the log.

- `InstancePollSeconds`: polling cadence. Your failover window’s lower bound is roughly one of these intervals plus a probe round-trip. We use 1 s in the lab. The original upstream default is 5 s, which is reasonable for production at scale.

- `FailureDetectionPeriodBlockSeconds`: debounce window for emitting the same analysis twice. Lower means faster failover at the cost of more false positives on transient network blips.

- `RecoverMasterClusterFilters: [".*"]`: enables automatic recovery for every discovered cluster. Regex-match against `ClusterName` in production to scope auto-recovery to specific tiers.

- `PostMasterFailoverProcesses`: an array of shell commands fired after Orchestrator has successfully promoted a replica. Each entry runs with environment variables populated (`ORC_FAILURE_TYPE`, `ORC_FAILED_HOST`, `ORC_FAILED_PORT`, `ORC_SUCCESSOR_HOST`, `ORC_SUCCESSOR_PORT`). This is where ProxySQL gets its admin rewrite; more on that in the companion post.
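To make the hook contract concrete, here is a minimal sketch of how a `PostMasterFailoverProcesses` entry might read the environment variables listed above before doing anything else. It is not the contents of `proxysql-rewire-hook.sh`; the `FailoverEvent` type and both function names are hypothetical, and the ProxySQL-side update is deliberately left out because the companion post covers it.

```python
import os
from dataclasses import dataclass
from typing import Mapping

# Variable names as listed for PostMasterFailoverProcesses.
ORC_VARS = ("ORC_FAILURE_TYPE", "ORC_FAILED_HOST", "ORC_FAILED_PORT",
            "ORC_SUCCESSOR_HOST", "ORC_SUCCESSOR_PORT")


@dataclass(frozen=True)
class FailoverEvent:
    failure_type: str
    failed_host: str
    failed_port: int
    successor_host: str
    successor_port: int


def event_from_env(env: Mapping[str, str] = os.environ) -> FailoverEvent:
    """Collect the failover details, failing loudly if any variable is absent."""
    missing = [name for name in ORC_VARS if not env.get(name)]
    if missing:
        raise ValueError("missing hook variables: " + ", ".join(missing))
    return FailoverEvent(
        failure_type=env["ORC_FAILURE_TYPE"],
        failed_host=env["ORC_FAILED_HOST"],
        failed_port=int(env["ORC_FAILED_PORT"]),
        successor_host=env["ORC_SUCCESSOR_HOST"],
        successor_port=int(env["ORC_SUCCESSOR_PORT"]),
    )


def describe(event: FailoverEvent) -> str:
    """One log line per failover; the routing change would follow this step."""
    failed = f"{event.failed_host}:{event.failed_port}"
    successor = f"{event.successor_host}:{event.successor_port}"
    return f"{event.failure_type}: {failed} -> {successor}"
```

Validating the variables up front is a design choice: a hook that silently runs with an empty successor host is harder to debug than one that exits with an error Orchestrator can log.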

`BackendDB: "sqlite"` is fine for single-node Orchestrator deployments, including small production deployments where the HA tool itself is not load-bearing. For larger clusters, set up a MySQL backend with its own replication and run Orchestrator in Raft mode across three nodes; that is Orchestrator’s HA story for itself.
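The pitfalls called out in the key notes are easy to check mechanically before starting Orchestrator. The following is a hedged sketch of such a pre-flight check, not a tool that ships with the fork: the function names are hypothetical, the SQLite rule is an assumption based on the sample config, and the lower-bound estimate simply restates the "one poll interval plus a probe round-trip" rule of thumb.

```python
import json


def check_config(cfg: dict) -> list:
    """Return human-readable warnings for the pitfalls described above."""
    problems = []
    if cfg.get("ProviderType") != "postgresql":
        problems.append("ProviderType is not 'postgresql'; the MySQL protocol will be used")
    # 3306 is the MySQL-era default described in the key notes.
    if cfg.get("DefaultInstancePort", 3306) == 3306:
        problems.append("DefaultInstancePort is 3306; set it to your PostgreSQL port")
    # Assumption: a SQLite backend needs an explicit data file path.
    if cfg.get("BackendDB") == "sqlite" and not cfg.get("SQLite3DataFile"):
        problems.append("BackendDB is sqlite but SQLite3DataFile is empty")
    if not cfg.get("RecoverMasterClusterFilters"):
        problems.append("RecoverMasterClusterFilters is empty; no automatic recovery")
    return problems


def failover_lower_bound_seconds(cfg: dict, probe_round_trip_seconds: float) -> float:
    """Rough floor on detection time: one poll interval plus one probe round-trip."""
    return cfg["InstancePollSeconds"] + probe_round_trip_seconds


def check_config_file(path: str) -> list:
    """Convenience wrapper for a config stored as a JSON file."""
    with open(path, encoding="utf-8") as handle:
        return check_config(json.load(handle))
```

An empty list from `check_config` means none of these specific pitfalls were found; it says nothing about credentials, network reachability, or role privileges.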

Discovering the topology

Once Orchestrator is up, you seed it through the API. In the common case, where every instance listens on `DefaultInstancePort` (5432), seeding the primary is enough:

    curl -sf http://orch:3098/api/discover/db-primary.example.com/5432

Orchestrator reads `pg_stat_replication` on the primary, picks up each replica’s `client_addr`, assumes `DefaultInstancePort` for the listening port, and crawls to them. A second or two later you can ask what it knows:

    curl -s http://orch:3098/api/clusters
    # ["db-primary.example.com:5432"]

    curl -s http://orch:3098/api/all-instances | jq -r '
      .[] | "\(.Key.Hostname):\(.Key.Port) RO=\(.ReadOnly) Master=\(.MasterKey.Hostname):\(.MasterKey.Port)"'
    # db-primary.example.com:5432 RO=false Master=:0
    # db-standby1.example.com:5432 RO=true Master=db-primary.example.com:5432
    # db-standby2.example.com:5432 RO=true Master=db-primary.example.com:5432

If your instances live on mixed ports on the same host (as in the companion post’s lab, where the three PostgreSQL processes listen on 5433/5434/5435), seed each one explicitly, with one `/api/discover/host/port` call per instance. The walk still works going up from a replica (the replica’s `primary_conninfo` carries the primary’s full `host:port`), but the walk down from a primary relies on `DefaultInstancePort` to be right.
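For the mixed-port case, per-instance seeding is simple to script. The sketch below assumes only the `/api/discover/host/port` path shown in this post and that a plain GET is enough to trigger discovery, as with the curl calls; the function names, the 5-second timeout, and the `127.0.0.1` host in the usage note are illustrative assumptions.

```python
from urllib.request import urlopen


def discover_url(base_url: str, host: str, port: int) -> str:
    """Build the discovery URL for one instance."""
    return f"{base_url.rstrip('/')}/api/discover/{host}/{port}"


def http_status(url: str) -> int:
    """GET the URL and return the HTTP status; urlopen raises on 4xx/5xx."""
    with urlopen(url, timeout=5) as response:
        return response.status


def seed_instances(base_url: str, instances, fetch=http_status) -> dict:
    """Seed every (host, port) pair explicitly, one request per instance."""
    return {
        f"{host}:{port}": fetch(discover_url(base_url, host, port))
        for host, port in instances
    }
```

For three instances on one host, a call such as `seed_instances("http://orch:3098", [("127.0.0.1", p) for p in (5433, 5434, 5435)])` issues three requests. The `fetch` parameter exists so the function can be exercised without a running Orchestrator.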

The cluster name defaults to the primary’s `host:port`; you can override it with a custom `ClusterNameToInstancesMap` or `ClusterAliasOverride` if you want operator-friendly names. The web UI at http://orch:3098/web displays the same graph visually, with instance state colour-coded and failover buttons wired to the same API endpoints.

Everything is a REST call. `POST /api/graceful-master-takeover` to run a planned switchover. `POST /api/move-below` to relocate a replica. `POST /api/begin-downtime` to mark an instance down for maintenance and suppress recovery. The full API is documented in the repository’s `docs/` tree.

What Orchestrator deliberately doesn’t do

Orchestrator is a detect-and-decide layer. It is not:

- A proxy. Client applications do not talk to Orchestrator. When it promotes a new primary, the application has no way to know unless something in the data path changes its routing. That’s where ProxySQL fits.

- A fencing tool. Orchestrator can tell you the old primary is unreachable, and it can refuse to promote until every replica has caught up past a configured LSN. It cannot guarantee the old primary will stay dead. For workloads where split-brain cannot be tolerated, you layer fencing (STONITH, watchdog, cloud-provider force-detach) on top.

- A quorum system for storage. Orchestrator’s own Raft layer is for Orchestrator’s HA, not for the PostgreSQL data plane. Your replication is still plain streaming replication (synchronous or asynchronous, your choice).

This minimalism is a feature. Orchestrator handles the parts that are hard to get right — discovery, decision-making across many clusters, consistent audit trails — and stays out of everything else.

The CI story

Before trusting a new tool with the primary of a production database, most operators want to know how the tool is tested. The short answer for Orchestrator’s PG port: the same GitHub Actions workflow that runs on every commit tests discovery, graceful switchover, and unplanned failover against real PostgreSQL containers, across three major PG versions.

The workflow lives at `.github/workflows/functional.yml` and the PG matrix job is `functional-postgresql`. Its driver script, `tests/functional/test-postgresql.sh`, runs five test groups:

- Topology discovery (primary + standby)

- API tests

- Graceful primary switchover (happy path)

- Graceful switchover negative cases (refusing to promote a replica with too much lag, etc.) and a round-trip switch-back

- Failover test: kill current primary. This stops the primary container mid-workload, then waits for Orchestrator to detect `DeadPrimary`, promote the standby, and record a successful recovery in `/api/v2/recoveries`

Every one of those runs on PG 15, PG 16, and PG 17 for every push and every pull request. When the PG provider ships a config change, you see it fail on the tier where it breaks.

Try it

The easiest way to explore is the lab in the companion post. We will soon publish another blog post that sets up one primary and two replicas locally, wires Orchestrator and ProxySQL in, and gives you a reproducible kill-primary benchmark you can run in an empty afternoon.

If you’ve been running Patroni and are curious what an API-first, topology-graph-first HA tool feels like, grab the lab, seed discovery with one curl, and see your cluster light up.

GitHub repository: Orchestrator