ProxySQL and MaxScale on point select workload

Few days ago MariaDB announced that MaxScale 2.1 is roughly 45% faster than MaxScale 2.0 .

I first want to congratulate MaxScale Team: MaxScale 2.1 seems to have better performance than MaxScale 2.0, great work!

Since MaxScale 2.0 was considerable slower than ProxySQL, I was curious to compare ProxySQL against new MaxScale 2.1.

Reading the article, the first thing that caught my attention was(quoting). “In version 2.1 a session and all its related connections are pinned to a particular worker thread.” .

I didn’t check how this is implemented, but I am sure this is the right way to have a high performance proxy. I think it is the right way because this is at the base of the ProxySQL network core: each worker thread has its own poll(), and this seems to be the best scalable solution (see https://www.percona.com/blog/2016/05/12/proxysql-versus-maxscale-for-oltp-ro-workloads/).

The second thing that caught my attention was the comparison between MaxScale and ProxySQL. But I will come back to this shortly.

Unfortunately there weren’t a lot of details about the benchmark. I am not sure how many people noticed that at 4, 8, 16 and 32 connections the throughput via MaxScale 2.1 is always higher than direct to MySQL . And that from 64 connections onwards still some configurations of MaxScale 2.1 are faster than directly to MariaDB . Is that impossible? It is possible: maybe the bottleneck is in the client doing I/O or MySQL server, but not the proxy. And the proxy layer should be extremely lightweight!

ProxySQL at 4 threads doesn’t seem to match MaxScale, while it does at 8 threads. To me that probably means that CPU is being a bottleneck for ProxySQL at 4 threads, while at 8 threads the bottleneck is probably MariaDB server. And again, at high number of connections the performance of both MaxScale and ProxySQL are higher than direct.

Maybe is the network being saturated? I did some math, at around 330k QPS, network usage should be around 700Mbps: still far from 1Gbps. Is MariaDB becoming a bottleneck? Probably yes, as 64-128 sysbench connections can easily saturate a server.

I think there is something unclear in these graphs, so I decided to try myself.

ProxySQL and MaxScale have very different configuration, therefore it is possible to obtain very different (and sometime misleading) results depending on the configuration.

For this reason I will describe the various configurations.

To avoid that the backends become a bottleneck, I had configured 3 MySQL servers (5.7.17) on 3 different hosts.

Initially I planned to run the benchmark on the the same Group Replication cluster built some weeks ago here, but I couldn’t find how to configure Group Replication on MaxScale (is it supported?) therefore I converted the same cluster into a 1 master + 2 slaves. As all the traffic will be sent to slaves, de facto the active backends are now 2.

sysbench, proxysql and maxscale are all running on a 4th different host. All the servers have 20 CPUs cores, Hyper-Threading off, and 10Gbps network.

MaxScale’s configuration can be found here, while I will list here ProxySQL’s configuration.

Create user:

Admin> INSERT INTO mysql_users (username,password,default_hostgroup)

VALUES ('sbtest','sbtest',1);

Admin> LOAD MYSQL USERS TO RUNTIME;

Query OK, 0 rows affected (0.00 sec)

Admin> LOAD MYSQL SERVERS TO RUNTIME;

Query OK, 0 rows affected (0.00 sec)Configure monitoring user:

Admin> SET mysql-monitor_username='mon';

Query OK, 1 row affected (0.00 sec)

Admin> SET mysql-monitor_password='pass';

Query OK, 1 row affected (0.00 sec)

Admin> SELECT * FROM global_variables WHERE variable_name IN ('mysql-monitor_username','mysql-monitor_password');

+------------------------+----------------+

| variable_name | variable_value |

+------------------------+----------------+

| mysql-monitor_password | pass |

| mysql-monitor_username | mon |

+------------------------+----------------+

2 rows in set (0.01 sec)

Admin> LOAD MYSQL VARIABLES TO RUNTIME;

Query OK, 0 rows affected (0.00 sec)

Admin> SAVE MYSQL VARIABLES TO DISK;

Query OK, 72 rows affected (0.00 sec)Configure servers:

Admin> INSERT INTO mysql_servers (hostgroup_id,hostname,port,max_connections)

VALUES

(1,'10.11.12.42',5717,1100),

(2,'10.11.12.35',5717,1100),

(2,'10.11.12.39',5717,1100);

Query OK, 3 rows affected (0.00 sec)

Admin> SELECT hostgroup_id hid, hostname, port, status, max_connections FROM mysql_servers;

+-----+-------------+------+--------+-----------------+

| hid | hostname | port | status | max_connections |

+-----+-------------+------+--------+-----------------+

| 1 | 10.11.12.42 | 5717 | ONLINE | 1100 |

| 2 | 10.11.12.35 | 5717 | ONLINE | 1100 |

| 2 | 10.11.12.39 | 5717 | ONLINE | 1100 |

+-----+-------------+------+--------+-----------------+

3 rows in set (0.00 sec)

Admin> LOAD MYSQL SERVERS TO RUNTIME;

Query OK, 0 rows affected (0.01 sec)

Admin> SAVE MYSQL SERVERS TO DISK;

Query OK, 0 rows affected (0.00 sec)The benchmark is supposed to be a read-only mode sysbench, using only point selects. For this reason we will create a rule to route all point selects to slaves. Only point selects! Configure query rule, using regular expression:

Admin> INSERT INTO mysql_query_rules (rule_id, active, match_digest, destination_hostgroup, apply) VALUES (1,1,'^SELECT c FROM sbtest. WHERE id=\?,2,1);

Query OK, 1 row affected (0.00 sec)

Admin> SAVE MYSQL QUERY RULES TO DISK;

Query OK, 0 rows affected (0.00 sec)

Admin> LOAD MYSQL QUERY RULES TO RUNTIME;

Query OK, 0 rows affected (0.00 sec)So far we have configured:

- MaxScale Read-Write Service (readwritesplit) on port 4006 and socket /tmp/maxscale_4006.sock

- MaxScale Read-Only Service (readconnroute) on port 4008 and socket /tmp/maxscale_4008.sock

- ProxySQL on port 6033 and socket /tmp/proxysql.sock

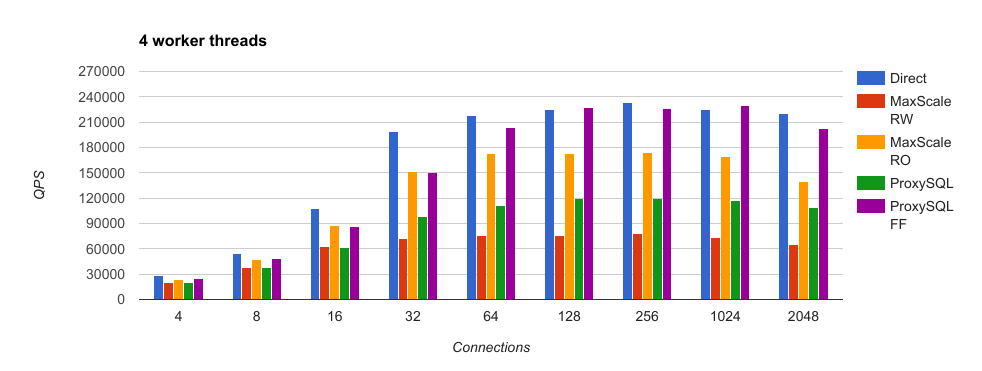

To minimize overhead, sysbench will connect to the proxies via unix socket domain file. The first series of benchmark was executed with both proxies configured with 4 worker threads.

for t in 4 8 16 32 64 128 256 1024 2048 ; do

for s in maxscale_4006 maxscale_4008 proxysql ; do

./sysbench --num-threads=$t --max-time=60 --max-requests=0 --test=./lua/oltp_point_select.lua \

--mysql-user=sbtest --mysql-password=sbtest --oltp-table-size=10000000 --oltp-tables-count=8 \

--report-interval=1 run --mysql-socket=/tmp/$s.sock > results_4/thr"$t"_"$s".txt

done

doneThe output files are available here .

The results were indeed interesting! Up to 16 connections, the throughputs of MaxScale Read-Write and ProxySQL are almost identical, while MaxScale Read-Only is higher. From 32 connections onward, the throughput of ProxySQL is up to 50% faster than MaxScale Read-Write, while the throughput of MaxScale Read-Only is 50% higher than ProxySQL.

Doing benchmarks right isn’t always easy. First, it is important to describe what is being compared, and try not to compare apples and oranges. Second, it is important to understand where is the bottleneck, or results can be misleading.

MaxScale Read-Only Service (readconnroute) is an extremely simple module: after authentication it simply forwards packets from one side of the connection to the other. After authentication, readconnroute is not protocol aware anymore (unless some filter apply): it doesn’t inspect the traffic, but only forward it. ProxySQL has a similar functionality, called Fast Forward. To make a fair comparison, MaxScale readconnroute should be compared to ProxySQL Fast Forward.

Note that I strongly discourage the use of ProxySQL Fast-Forward and MaxScale Read-Only other than in very specific use cases: if you need a proxy that is not protocol aware, most of the time HAProxy will do a better job!

Let’s enable fast_forward in ProxySQL, and run again the benchmark against ProxySQL:

mysql> select * from mysql_users\G

*************************** 1. row ***************************

username: sbtest

password: sbtest

active: 1

use_ssl: 0

default_hostgroup: 0

default_schema: NULL

schema_locked: 0

transaction_persistent: 0

fast_forward: 0

backend: 1

frontend: 1

max_connections: 10000

1 row in set (0.00 sec)

mysql> UPDATE mysql_users SET fast_forward=1, default_hostgroup=2;

Query OK, 1 row affected (0.00 sec)

mysql> LOAD MYSQL USERS TO RUNTIME;

Query OK, 0 rows affected (0.00 sec)The output files are also available here. Putting the results in a graph:

We already noted that normal ProxySQL is up to 50% faster than MaxScale Read-Write.

How does ProxySQL Fast-Forward compare to MaxScale Read-Only? ProxySQL is up to 85% faster than MaxScale!

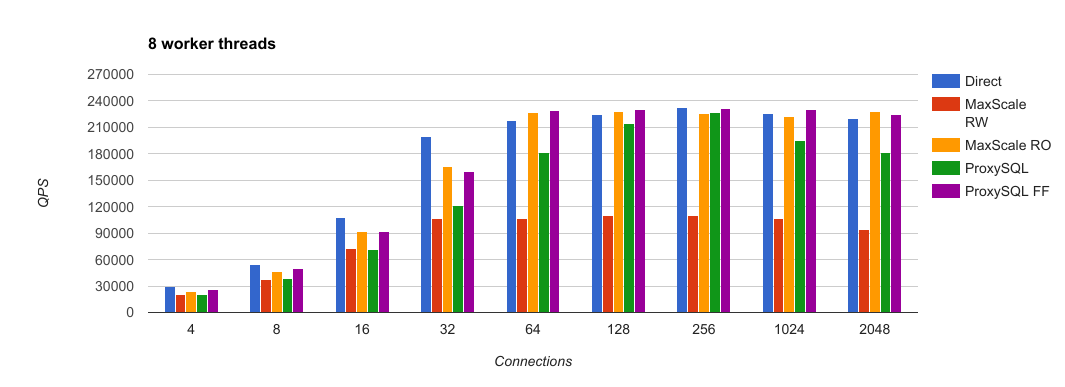

Results with 8 worker threads

Next exercise is to check how ProxySQL and MaxScale behave with 8 worker threads. The output files are available here.

While with 4 worker threads ProxySQL Fast-Forward performs up to 85% better than MaxScale Read-Only, with 8 worker threads they have very close performance and with throughput close to direct connections. This is expected: the bottleneck are now the backends and not the proxies. What is interesting is that at 256 connections ProxySQL provides double throughput than MaxScale Read-Write: 100% more QPS! Between 128 and 256 connections, ProxySQL with 8 worker threads is able to provide the same throughput of direct connections to MySQL! Because at 8 worker threads the bottleneck are not the proxies but the backends, I think that testing 8 threads (or 16) has very little value. You can’t compare the performance of your client/proxy if the backend is the bottlneck, unless your client/proxy isn’t even able to create enough load on the backend.

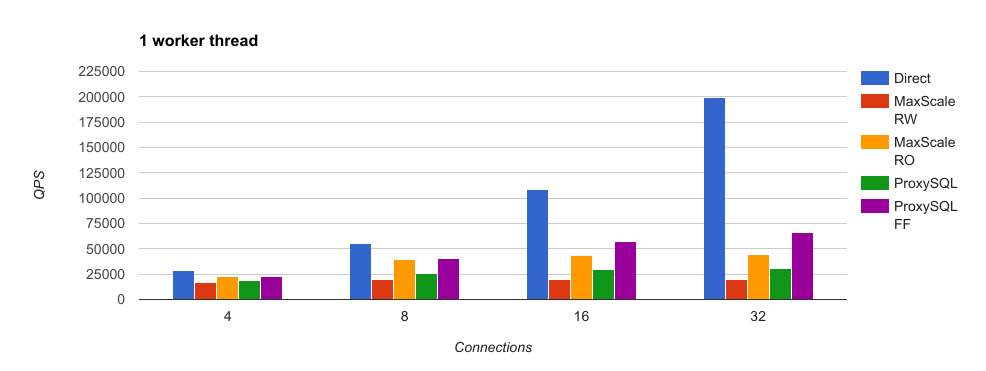

Results with 1 worker threads

As a final test I compared performance with just 1 worker thread, because it is important to understand the performance of the proxies and not of the backends. Performance with just 1 worker thread are indeed low, and expected, yet the trend is confirmed:

- ProxySQL is 50% faster than MaxScale Read-Write Service

- ProxySQL Fast-Forward is 50% faster than MaxScale Read-Only Service

The output files are also available here.

Conclusions

When scalability matters, ProxySQL is still the most performing proxy for MySQL. Even if MaxScale 2.1 is faster than 2.0, its performance are still very poor compared to ProxySQL, at least for point select workload. MaxScale has still a long way to become the best in town. If you need a high-availabilty, scalable, high-performance, and Open Source proxy for MySQL, I invite you to download and try ProxySQL.